August 24, 2015

Book explores future of developmental robots

CARBONDALE, Ill. – Understanding how babies learn is crucial to building robots that can think and make decisions for themselves.

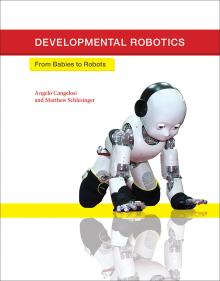

A new book, “Developmental Robotics: From Babies to Robots,” co-authored by Matthew Schlesinger, associate professor of brain and cognitive sciences in the Department of Psychology at Southern Illinois University Carbondale, explains why.

The book, released this year as part of the MIT Press Intelligent Robotics and Autonomous Agents series, has been well-received by peers in the field, who refer to the book as “a source of inspiration,” a “fascinating review,” and “a very timely and convincing message in support of interdisciplinary research.” The co-author is Angelo Cangelosi, professor of artificial intelligence and cognition at the Centre for Robotics and Neural Systems at the University of Plymouth, England.

Developmental robotics refers to researching and designing robots capable of thinking independently. Such robots theoretically could solve problems on their own, without designer intervention, mimicking human intelligence. Developmental robots must be able to observe and learn from their environments, and they must be able to use experience to make decisions, just like humans.

That’s a big reason why developmental robotics designers take inspiration directly from the discipline of developmental psychology – the study of how human babies and children develop and learn to think. One important theory Schlesinger and Cangelosi describe in their book, and the reason why human appearance is common in developmental robotics, is “embodied cognition,” learning through physical interaction with the environment.

“Babies learn with their bodies as well as with their minds,” Schlesinger explained. “They see, they touch, they taste, they hear. The way they interact with the environment is affected by the form of the human body.”

It makes sense, then, to build robots that interact with the environment in much the same way children do. A robotic model known as iCub is one of the most advanced developmental robots. iCub is a toddler-sized, humanoid robot designed to interact with and explore its environment as a toddler might. The robot has 53 motors that move its head, arms and hands, waist and legs. It can see and hear and has a sense of touch. The iCub model is learning toddler-esque behaviors, such as playing catch, dancing to music and playing games.

For iCub to learn on its own, it must have the capability to explore. The first step is learning how to move and to absorb data from the available senses. For example, Schlesinger explained, a baby learns several things from the act of making noise, including how to make different noises, how to hear the noises, how it sounds when the self-made noises stop and how the noises influence the environment. A developmental robot learns its physical capabilities in similar fashion.

In addition to interacting with the environment, developmental robots need another childlike quality in order to learn, a quality Schlesinger describes as “intrinsic motivation” or simply, curiosity.

“Developmental robots are meant to explore their environments autonomously, deciding what they want to learn and what they want to achieve,” Schlesinger said. The decision-making process is part of the intrinsic motivation.

There are more than 20 iCub models in laboratories around the world, mostly in Europe. The price tag – nearly $400,000 – makes iCub a considerable investment. Only one model is in the United States. iCub simulations, however, make the technology more widely available. Schlesinger uses a simulation in his research at the SIU Vision Lab where he leads a research team that studies humans, and designs and tests computer models and robot simulations. Schlesinger’s goal with that lab is to explore how vision functions as an improvable skill, and how vision guides mastery of other motor skills such as reaching, pointing and walking.

Developmental robots inspired by our understanding of human development are in turn helping us learn more about human development. The robots offer consistency – never a guarantee with human children.

“With developmental robots, we’re better able to test theories about cognitive development,” Schlesinger said. “We are using robots and robot simulations to help study the role of movement on the learning process, the development of social learning through cooperation and sharing, even how babies acquire language.”

Developmental robots are also being used in therapy for those with developmental disorders. For example, socially assistive robots can help children with autism spectrum disorders learn social cues and how better to engage and interact with people. Similarly, a Japanese robot designed to look like a baby seal soothes elderly patients with Alzheimer’s disease. The interaction between it and patients improves socialization between patients and caregivers, and helps keep patients engaged and socially responsive.

Schlesinger and Cangelosi’s book concludes with an overview of achievements in the interdisciplinary research of developmental robotics, and an optimistic look to the future. The back-and-forth exchange of knowledge will likely continue, with new understanding of human cognitive development leading to improvements in developmental robots. It’s a new field, full of promise and potential.

Schlesinger envisions a day when developmental robots are as common in our daily lives as Internet access and smart phones are now.

“I am convinced we are going to get to a day – maybe in as little as 20 years – when interactive machines will be all around us, when the gains from developmental robotics will have us interacting with machines and robots all the time,” he said.

The potential for increasingly independent robots – and more and more of them – naturally brings up some questions. Is it possible to raise a robot in a similar fashion to raising a child by increasing its capacity for personalized, memory-stored experience? Might independently thinking robots begin to design robots on their own, and to address and fix their own design flaws? Do developmental robots have feelings? Is there such a thing as too much artificial intelligence?

Science fiction has explored the possibilities – and consequences – of developmental robotics and artificial intelligence for decades. Recently, prominent scientist Stephen Hawking and inventor Elon Musk have publicly expressed concern that artificial intelligence could outstrip our own, possibly with dire results.

Schlesinger, too, noted that a robot apocalypse, to use a term from pop culture, is theoretically achievable, though he cautioned against hitting the panic button just yet. He noted that “robo-ethics” likely will become part of the interdisciplinary field of developmental robotics.

“I’d like to get more into the philosophy of this,” he said. “What will our relationship with robots be? We may get to a point where we never even question whether a robot has feelings. We may just take it for granted.”

(Matthew Schlesinger is available for interview or comments on the subjects of developmental psychology, developmental robots, cognitive development and human-robot interaction. Contact him at matthew.schlesinger@gmail.com or matthews@siu.edu.)